NB: In many contexts, AI and ML overlap but are distinct. In this post, I’m using them basically synonymously and completely interchangeably. Feel free to find/replace them all w/ the acronym of your choosing for a more pleasant reading experience.

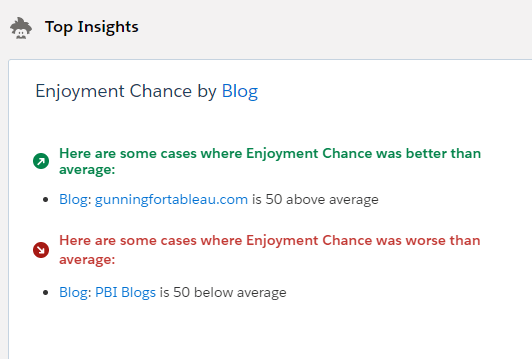

Tableau has just released an integration with Einstein Predictions, and there’s a ton to be explored and celebrated with that. It’s the first formal integration between Tableau and Salesforce stacks since the acquisition, it’s the easiest AI/ML in any BI product around, and it truly lowers the barrier to entry for people who know nothing about R, Python, etc. And it surfaces some great insights!

But as we all know, with great power comes great responsibility. ML has the power to find new insights in our data, find new ways to optimize processes, maximizing profit and minimizing cost. It also has the potential to augment some of our worst flaws and augment existing biases. I recently re-read Cathy O’Neil’s phenomenal book “Weapons of Math Destruction” on the risks we take with ML, and it feels incredibly relevant to this situation. Many in the traditional Tableau userbase may have little experience with ML up until now (yes, we do love TabPy), so it’s worth highlighting some of her advice through a Tableau lens. Consider this my own take on her book, which you should read!

The author lays forth 4 potential (and historical) problems with ML, and I’ve added on two of my own, along with my suggestions on how to approach each. Each of these, if followed, will help us create and deploy models that are not only more responsible but also more effective, removing detrimental human bias and adding efficiency wherever possible.

Her 4…

- Scalability

- Lack of Opacity

- Model Regulation

- Contestability

…and my own few…

- Confusing Optimization for Innovation

- Confusing Metrics and Targets

Scalability

This is literally the entire concept of creating citizen data scientists. We’re looking to allow more people to implement more data science in more places. It’s also the single biggest risk. Anything deployed irresponsibly can cause damage, but people’s inherent trust in AI and their willingness to “Set it and forget it” means that it can impact business processes at massive scale. O’Neil notes that the ease at which ML is scaled now (and the ease of scaling its impacts as well) means that irresponsible usage can have dangerous implication. Whether the negative impact is a social one (AI has been used to justify over-policing poor neighborhoods) or a business one (a poorly trained model could tell you to sell the wrong products to the wrong people), the ability to scale AI’s impact is also the ability to scale its potential for failure. Fear not! If attention is paid to the rest of her notes, ML can be deployed responsibly and in a helpful manner.

Opacity

Too often, ML models are trained on an entire dataset, deployed and accepted without appropriate documentation. A successful model should allow the end users to see what goes INTO it so they know they can trust what comes OUT of it. ML models are built entirely on training datasets, which are historical records. Historical records reflect our own biases in every way. These biases may be innocuous (an ML model would find that I should work harder before I’ve had my coffee) or massively impactful (ML models will reinforce histories of racism, sexism, and a whole host of -phobias). Avoiding opacity helps to build trust in your model, as well as allowing users to recommend additional variables that SHOULD be included in it. Even if we exclude the directly discriminatory elements, how many other elements correlate with those? Amazon was forced to scrap a hiring algorithm after it recommended not hiring attendees of all-female colleges. What proxies exist in your data, and how will you guard against them? Predictions helps with this in that it shows primary drivers of a prediction. Documenting the rest of your model is a key step to building trustworthy, effective models. Einstein’s ability to surface the reason for a prediction helps with accountability and transparency.

Difficult to Contest

ML models, at the end of the day, surface predictions, not sureties. They may seem similar, but it’s an important distinction. Especially when it comes to making high-impact decisions (remember that “impact” applies not only to the business, but the consumer as well), presenting AI projections as fact is irresponsible, and consumers should be protected from fully AI-based decisions.

Anecdotally, I was in southern Washington two weeks ago and we came to a cash-only toll bridge. We pulled over to an ATM to get $2 in cash. An AI system flagged our card as suspicious activity, and we spent 45 minutes on the phone with Charles Schwab just so we could be allowed access to our own money. In our case, this was harmless (we got ice cream and sat by the bridge) but automated denial of access to one’s own belongings could have serious consequences. What if there was a time-based need for the money? What if my phone was dead? Uncontestable or difficult-to-contest decisions deliver bad customer experience, can punitively impact the most vulnerable customers, and can set your AI implementation up for failure. Remember that AI is only profiling a set of dimensions, it can’t know the individual’s intent.

Optimize vs Innovate

An ML model is built to take our existing processes and tweak and hone them to perfection. Even if we deploy a model completely free of bias, at best it will only perfect our current process. To butcher a Henry Ford quote (it’s apocryphal anyway), “If we asked ML what it wanted, it would’ve optimized for faster horses”. ML isn’t here to invent the car! Allow ML to perfect your existing processes, but don’t pretend it’s a replacement for human innovation.

Use ML in tandem with what your users know about the business. Successful AI implementations in BI are a work in progress, but they’ll likely involve a balance of AI and human involvement. Allow AI to help fine-tune processes and expose wasted expenses, but allow the data consumers to find creative solutions to those problems in ways that AI can’t innovate. Better yet, put Einstein next to AskData to allow users the ability to explore the data with Einstein as a guide for which fields may be most important!

Targets vs Metrics

Don’t allow yourself to confuse a target and a metric, because once a metric becomes a public target…it loses its value as a metric. If people are trying to attain a metric, rather than the outcome that the metric measures, you’ll optimize for the wrong scenario.

Imagine I build a model seeking to maximize profit, and it tells me I should sell direct to consumer, rather than through any third parties. I then publish this as a target for my internal salespeople, with a prize for whoever sells the highest % direct to consumer. A clever salesperson will win first prize (as you know, a Cadillac Eldorado) by simply not selling anything to a third party…but ultimately that may cut down their sales by so much that they have almost 0 profit. They’ve achieved the metric, but at the cost of the target. The book details an incredible example of how Baylor has cheated an equally flawed algorithm regarding the college admission process, and how it completely invalidates the models we began with. How do you avoid this scenario?

- Ensure that the model is being pointed at the desired outcome, not something that correlates with the desired outcome.

- Ensure that people implementing policy as a result of the model understand what the model does and doesn’t recommend.

- Align incentives with real-life outcomes. The scenario above should seek to maximize profit, not direct-to-consumer sales!

Overall, ML in BI creates a huge opportunity. Allowing casual business users to train and deploy models can unclog bottlenecks in your data science department, making data science available for all sorts of projects, not just the top-line massive-budget projects. Dashboard builders can use this to influence which dimensions they should ignore, and which they should dig in on. Casual consumers of dashboards get additional context as to why the data they’re looking at is important. Web Editors and AskData folks now have a way to drive their exploration towards a target, rather than wandering aimlessly through massive data models.

At the same time, the expanded userbase means an expanded base of people responsible for deploying models. These people should be taught about AI/ML, what “intelligence” really means in that capacity, and how it can be used to harm both people and businesses. Responsible deployment of AI isn’t a one-time effort, it’s an ongoing enablement of employees and inspection of models.